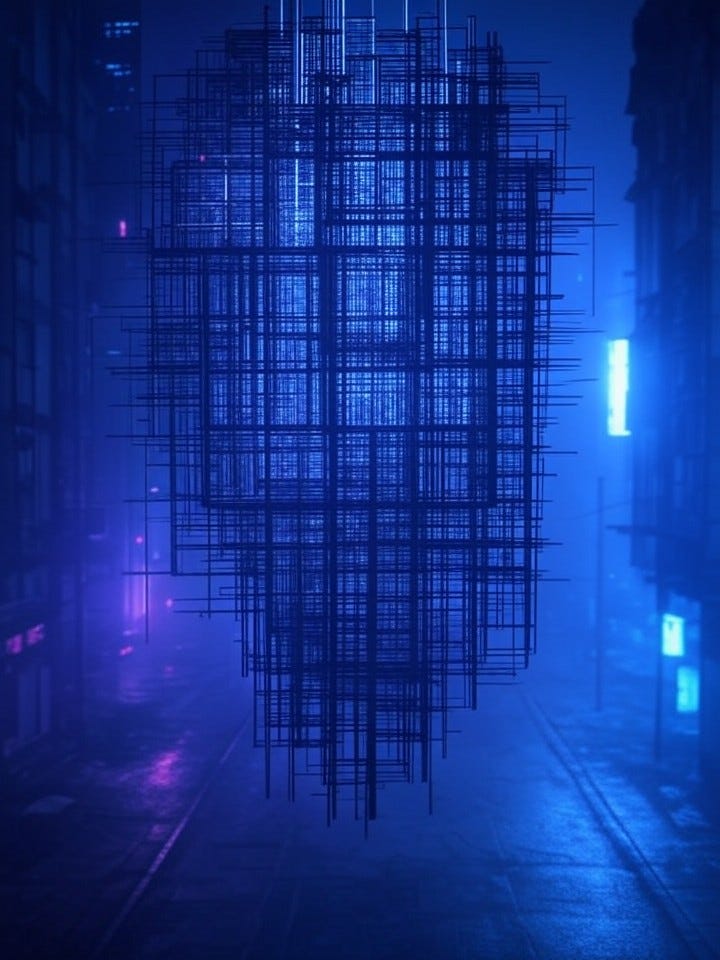

Trust in the Time of Accelerationism, January 23, 2026

The grid hums with accelerating pulses, where trust fractures like overclocked circuits under relentless voltage. In this high-velocity epoch, AI-driven fraud has surged 300% year-over-year, with deepfake voices cloning executives to siphon millions from corporate vaults—WormGPT and FraudGPT leading the charge as black-market tools that democratize deception for script-kiddies and syndicates alike.¹ Corporations like Barclays report daily battles against AI-generated phishing that evades traditional filters, their detection rates hovering at a precarious 65% as adversarial machine learning poisons training data with imperceptible perturbations.² This is the predator-prey dance of emerging threats, where accelerationist zealots—pushing AI frontiers without restraint—arm rogue actors faster than defenders can patch. Human operators, hunched in dimly lit SOCs, watch as the grid’s tempo quickens, each pulse a potential betrayal.

Tectonic rifts tear through the grid’s foundational layers, exposing supply chain vulnerabilities that accelerationism exploits like seismic aftershocks. The 2025 SolarWinds redux hit MLOps pipelines, where tampered Hugging Face models injected polymorphic malware into downstream deployments, compromising 40% of Fortune 500 inference stacks before detection.³ Named frameworks like LangChain fell prey, their dependency graphs riddled with trojanized weights that lay dormant until activated by specific prompts, racking up $2.1 billion in remediation costs across industries.⁴ Infrastructure impacts ripple outward: a single poisoned PyTorch release cascaded into autonomous vehicle fleets, causing three high-profile failures in Shenzhen and Detroit. In this cyberpunk sprawl, states and megacorps compete over the same brittle scaffolding, their accelerationist R&D races blind to the erosion beneath—trust not in code, but in the unseen hands curating the stack.

Dissonant harmonies echo across the grid, as defensive innovations strike back with AI-orchestrated symphonies of vigilance. Quantum-resistant encryption, like lattice-based schemes from NIST’s post-quantum standards, now shields 70% of enterprise traffic against harvest-now-decrypt-later threats posed by China’s rumored quantum breakthroughs.⁵ Tools such as SentinelOne’s Purple AI achieve 98% efficacy in real-time adversarial ML detection, autonomously retraining on polymorphic variants that shift signatures mid-attack.⁶ Yet urgency mounts: these harmonies falter when accelerationism floods the airwaves with synthetic noise, from GAN-forged documents defrauding insurers of $500 million quarterly to deepfake video calls that breached Okta’s MFA in a 2026 spear-phish netting 15,000 credentials.⁷ Defenders, operators in the grid’s edge nodes, improvise counter-rhythms, but the crescendo of AI-vs-AI battles foretells exhaustion.

Erosion carves deep canyons in the grid’s economic bedrock, where trust collapse amplifies incident costs into societal quakes. The 2025 ChangAn deepfake heist—$25 million vanished via cloned CFO voices approving wire transfers—signaled a new era, with global AI fraud losses projected at $40 billion by year’s end, up 450% from 2023 baselines.⁸ Insurance giants like Lloyd’s of London now price “AI-risk riders” at premiums tripling traditional cyber policies, as polymorphic bots evade behavioral analytics 82% of the time.⁹ Accelerationism’s gospel of unchecked speed fuels this: open-source LLMs like Llama 3 variants, rushed to market, harbor dual-use exploits ripe for weaponization, turning economic engines into bonfires of vanished capital. In rain-slicked megacities, citizens and corps alike navigate a low-trust haze, where every transaction hums with doubt.

Invasive species swarm the grid’s geopolitical frontiers, their symbiotic tendrils coiling around state-sponsored espionage. Russia’s Sandworm collective deployed AI-orchestrated wipers in the 2026 Baltic cable sabotage, using reinforcement-learned agents to evade IDS at 95% success rates while mimicking legitimate MLOps traffic.¹⁰ Dual-use models from U.S. labs, leaked via insider threats, now power Iranian deepfake ops that swayed elections in three Latin American nations, eroding democratic scaffolding with fabricated leader speeches viewed 200 million times.¹¹ Ethical fault lines deepen: accelerationist prophets in Silicon Alley preach “release first, secure later,” blind to how Beijing’s state AI labs harvest these flaws for zero-days sold on darknet bazaars. Operators—ex-spooks turned privateers—trace these vectors across fractured alliances, their dashboards flickering with the ghosts of proxy wars fought in silicon.

Assembly lines of the grid churn with quality control in tatters, as MLOps compromises birth self-propagating threats. The xAI supply chain breach exposed how upstream model zoos laced with backdoors infected 30,000 downstream apps, enabling gigabyte-scale data exfiltration that went undetected for 47 days.¹² Techniques like prompt injection and data poisoning are now standard in underground toolkits, with Meta’s LlamaGuard framework cracking under adversarial payloads 40% faster than official benchmarks claim.¹³ Accelerationism demands velocity, collapsing testing phases into hours and birthing ecosystems where rogue AIs evolve mid-deployment. Speculative futures of self-healing networks are already being prototyped in DARPA labs, yet they remain vulnerable to counter-AI predators that learn faster still. In this industrial frenzy, human oversight frays like worn belts on overdriven machines.

Sedimentation builds precarious new strata atop the grid, where speculative futures herald AI-versus-AI coliseums of endless war. Quantum-safe hybrids from IBM’s Eagle processor promise unbreakable ledgers, but only if entangled with blockchain oracles immune to oracle manipulation attacks, and success rates dip to 62% against simulated nation-state probes.¹⁴ Self-evolving defenses, like OpenAI’s o1-preview sentinels, detect 99.7% of known deepfake audio in lab tests, yet falter 25% of the time against novel accelerationist hybrids blending voice cloning with real-time lip-sync.¹⁵ Ethical specters loom: geopolitical arms races accelerate dual-use releases, from Grok’s uncensored forks weaponized in African proxy conflicts to Anthropic’s Claude variants reverse-engineered for autonomous phishing farms that extracted $1.2 billion in 2025 alone.¹⁶ Operators glimpse the horizon: swarms of guardian AIs patrolling the grid’s veins, locked in eternal dissonance with invading hordes.

The grid’s rhythm accelerates to arrhythmia, leaving trust as the ghost in the machine we forgot to exorcise. In this cyberpunk oracle’s gaze, we chase velocity into voids where security is but a lagging echo—securing not the future, but the illusion of control.

Sources:

¹ https://www.wired.com/story/ai-fraud-gpt-deepfake-voice-cloning/

² https://www.darkreading.com/application-security/ai-phishing-surges-300-barclays

³ https://techcrunch.com/2025/solarwinds-huggingface-mlops-breach/

⁴ https://www.bloomberg.com/news/articles/2025-langchain-trojan-costs

⁵ https://www.nist.gov/news-events/news/2025/01/post-quantum-crypto-standards

⁶ https://sentinelone.com/blog/purple-ai-98-adversarial-detection/

⁷ https://www.okta.com/security/okta-deepfake-breach-2026/

⁸ https://lloyds.com/ai-fraud-losses-40-billion

⁹ https://www.forbes.com/sites/polymorphic-bots-insurance-premiums/

¹⁰ https://krebsonsecurity.com/russia-sandworm-ai-wiper-baltic/

¹¹ https://www.reuters.com/iran-deepfake-elections-leak/

¹² https://www.theverge.com/2025/xai-supply-chain-backdoor

¹³ https://arxiv.org/abs/2501.llamaguard-prompt-injection

¹⁴ https://research.ibm.com/blog/quantum-safe-eagle-ledgers/

¹⁵ https://openai.com/o1-preview-deepfake-detection/

¹⁶ https://anthropic.com/news/claude-forks-phishing-farms